Sonification: Making Data Sound

Computers and music have been mingling their intimate secrets for over 50 years. These two worlds evolve in tandem, and where they intersect they spawn entirely novel practices. One of these is “sonification”: turning raw data into sounds and sonic streams in order to discover new relationships within a data set by listening with a musical ear. This is similar to data visualization, which reveals new insights by presenting data to the eye as graphs or animations. A key advantage of sonification is sound’s ability to present trends and details simultaneously at multiple time scales, allowing us to absorb and integrate this information the same way we listen to music.

This evening will include two events: a talk and a hands-on workshop. The talk will take an in-depth look at the potential and applications of sonification. The workshop will offer participants an opportunity to work with their own data sets and explore sonification as a new approach to their data.

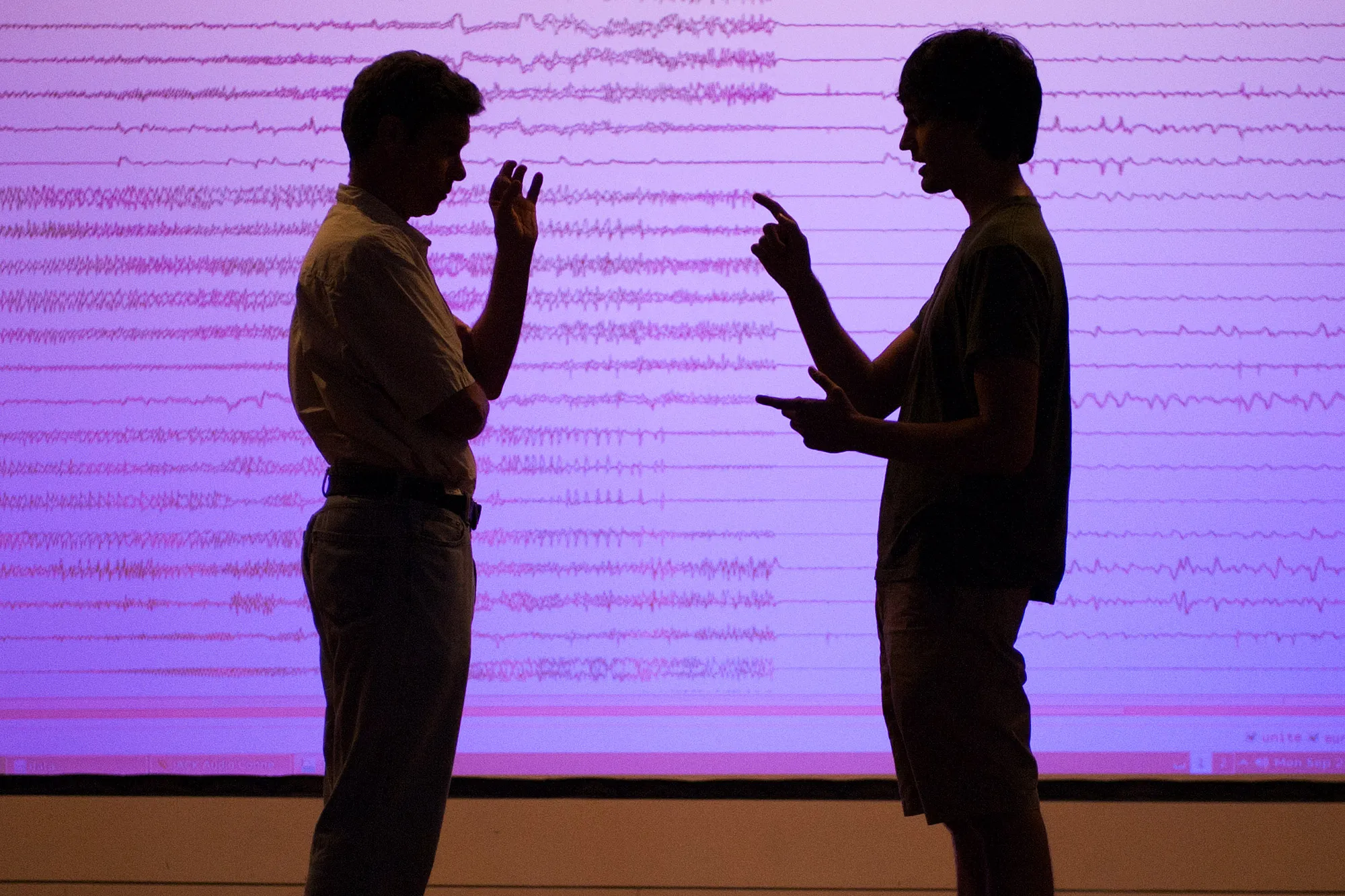

Chris Chafe is the director of Stanford University’s Center for Computer Research in Music and Acoustics (CCRMA). He approaches the practice of sonification from a background in computer-generated musical composition, in which algorithms are used to sculpt musical detail. In much the same way, sonification uses data sets to generate sounds that can lead to a different or deeper understanding of patterns and processes in the sonified data. From global economic trends and atmospheric CO2 changes to seemingly mundane events such as the ripening of fruit, sonification provides a means of gaining new perspectives on data through listening.

Main Image: Chris Chafe. Photo: Linda A. Cicero / Stanford University News Service.

Season

EMPAC 2018–19 presentations, residencies, and commissions are supported by Rensselaer Polytechnic Institute, the National Endowment for the Arts, and the Jaffe Fund for Experimental Media and Performing Arts.